PPG Foundation Models: The Complete Guide to Every Pre-Trained Model for Photoplethysmography

PPG foundation models are large pre-trained neural networks that learn general-purpose representations from massive photoplethysmography datasets, then transfer those representations to downstream tasks like heart rate estimation, atrial fibrillation detection, blood pressure estimation, stress classification, and sleep analysis. In practice, they mark a shift away from training one model per task toward building reusable PPG backbones that can be fine-tuned across clinical and wearable applications with much less labeled data.

That shift matters because PPG is now everywhere, in hospital monitors, rings, watches, patches, and phones, but labeled physiological datasets are still scarce, fragmented, and expensive to curate. Foundation models change the economics of the field. Instead of collecting a new supervised dataset every time you want to solve a new problem, you can start from a model that has already seen tens of thousands of hours, or in some cases millions of hours, of pulse wave data.

If you want the short version, here it is: as of 2026, PaPaGei, Pulse-PPG, GPT-PPG, AnyPPG, SiamQuality, Apple's large-scale wearable pretraining framework, and broader biosignal models such as BIOT and MOMENT define the current landscape of PPG foundation models. They differ sharply in data source, pretraining objective, openness, and real-world robustness. The field is moving fast, but it is finally mature enough to compare concrete models rather than just talk about the idea of a "PPG foundation model."

For background on pretraining mechanics, see our guide to self-supervised learning for PPG, our article on public PPG datasets and benchmarks, and our review of the end-to-end PPG machine learning pipeline.

What makes a PPG foundation model different from a traditional PPG deep learning model?

A traditional PPG deep learning model is usually trained end-to-end for one narrow task on one labeled dataset. A heart rate model is trained for heart rate. A blood pressure model is trained for blood pressure. A stress model is trained for stress. Performance often looks strong inside the training distribution, then drops when the device changes, the population changes, or motion artifacts get worse.

A PPG foundation model aims for something broader. It is pre-trained on large unlabeled or weakly labeled corpora, usually across many subjects and recording contexts, and learns a reusable representation of pulse morphology, rhythm, noise structure, vascular dynamics, and subject variation. Downstream tasks become lightweight heads or fine-tuning jobs on top of that shared encoder.

In practical terms, most models in this category have four features:

- Scale: thousands to millions of recording hours.

- Transferability: evaluated across multiple downstream tasks, not just one.

- Pretraining before supervision: self-supervised, contrastive, generative, masked reconstruction, or multimodal alignment.

- Representation reuse: the encoder is the product, not just the final classifier.

That does not mean every large PPG model qualifies. Some are simply very large supervised models trained for a single endpoint. Others are generic time-series models that happen to include PPG somewhere in the corpus. The best current PPG foundation models are the ones explicitly designed to learn transferable physiology from PPG itself.
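
The "shared encoder plus lightweight head" pattern described in this section can be sketched as a linear probe. This is a minimal illustration under stated assumptions, not any published model's API: `frozen_encoder` is a hypothetical stand-in (a fixed random projection), the 1250-sample window length and 64-dimensional embedding are arbitrary choices, and the data is synthetic.

```python
import numpy as np

rng = np.random.default_rng(0)

SEGMENT_LEN = 1250  # assumption: 10 s windows at 125 Hz
EMBED_DIM = 64      # assumption: embedding width of the stand-in encoder

# Hypothetical stand-in for pretrained weights. A real workflow would load a
# pretrained PPG encoder here and keep it frozen in the same way.
_W_ENC = rng.normal(size=(SEGMENT_LEN, EMBED_DIM)) / np.sqrt(SEGMENT_LEN)


def frozen_encoder(segments: np.ndarray) -> np.ndarray:
    """Map (n, SEGMENT_LEN) windows to (n, EMBED_DIM) embeddings; nothing is trained."""
    return np.tanh(segments @ _W_ENC)


def add_bias(emb: np.ndarray) -> np.ndarray:
    """Append a constant column so the head can learn an intercept."""
    return np.hstack([emb, np.ones((emb.shape[0], 1))])


def fit_linear_head(emb: np.ndarray, y: np.ndarray, l2: float = 1e-2) -> np.ndarray:
    """Closed-form ridge regression: the only trainable part of the pipeline."""
    X = add_bias(emb)
    return np.linalg.solve(X.T @ X + l2 * np.eye(X.shape[1]), X.T @ y)


def predict(emb: np.ndarray, head: np.ndarray) -> np.ndarray:
    return add_bias(emb) @ head


# Synthetic demo: labels are a linear function of the embeddings.
segments = rng.normal(size=(200, SEGMENT_LEN))
embeddings = frozen_encoder(segments)
labels = embeddings @ rng.normal(size=EMBED_DIM) + 70.0
head = fit_linear_head(embeddings, labels)
mae = np.mean(np.abs(predict(embeddings, head) - labels))
```

The point of the design is that only `head` is fitted per task. Swapping heart rate for stress or blood pressure means refitting a small head on the same embeddings, not retraining the encoder.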

Timeline: how PPG foundation models evolved from 2023 to 2026

The timeline is short, which is one reason this space is still easy to map.

| Year | Milestone | Why it mattered |
|---|---|---|
| 2023 | BIOT and Apple's large-scale wearable SSL work appear | Showed that biosignal foundation models and wearable-scale self-supervised pretraining were viable |
| 2024 | SiamQuality, PaPaGei, MOMENT | PPG-specific foundation modeling became explicit, with stronger transfer benchmarks |
| 2025 | Pulse-PPG, GPT-PPG, multimodal PPG supervision models | Shift toward robustness in real-world wearables, generative pretraining, and multimodal physiological guidance |
| 2025 to 2026 | AnyPPG, newer wavelet and multimodal variants | Field expands from universal encoders toward physiology-guided and phenome-scale health profiling |

The early phase was mostly proof of concept. Researchers asked whether self-supervised pretraining on physiological time series could work at all. By 2024 and 2025, the question had changed to which pretraining signal is best for PPG: contrastive morphology, masked reconstruction, autoregressive generation, or supervision from ECG and other synchronized modalities.

Comparison table: every major PPG foundation model at a glance

| Model | Year | Training Data Scale | Architecture | Open Source? |
|---|---|---|---|---|
| PaPaGei | 2025 | 57,641 h / 13,517 subjects | ResNet CNN with morphology-aware SSL | Yes |
| Pulse-PPG | 2025 | ~55,000 h / 120 participants (field) | ResNet encoder, noisy field data | Yes |
| GPT-PPG | 2025 | ~2.2M h / 200M+ samples | Decoder-only GPT (19M-1B params) | Research code |
| AnyPPG | 2025 | 109,909 h / 58,796 subjects | Dual-branch with ECG alignment | Yes |
| SiamQuality | 2024 | 600,000+ h / 36M paired segments | ResNet CNN | Partial |
| Apple wearable | 2023 | ~141K participants / ~3 years | EfficientNet-style 1D CNN | No |
| BIOT | 2023 | Multi-biosignal cross-dataset | Unified tokenizer + transformer | Yes |
| MOMENT | 2024 | General time-series pile | Transformer encoder | Yes |
| Multimodal PPG FM | 2025 | ICU with paired ECG + respiration | PPG encoder, multimodal supervision | Research-stage |
| Wavelet MMR | 2025 | ~17M segments / ~32K users | Transformer encoder | Research-stage |
| PPG-Distill | 2025 | Distilled from teacher FMs | Compressed student models | Yes |

PaPaGei, the first open PPG foundation model

Paper: Pillai et al., "PaPaGei: Open Foundation Models for Optical Physiological Signals." ICLR 2025. arXiv: 2410.20542, DOI: 10.48550/arXiv.2410.20542, OpenReview: https://openreview.net/forum?id=kYwTmlq6Vn.

PaPaGei is the model that made "PPG foundation models" a concrete category rather than a speculative research direction. The authors are Arvind Pillai, Dimitris Spathis, Fahim Kawsar, and Mohammad Malekzadeh, with affiliations including Nokia Bell Labs, Dartmouth College, the University of Cambridge, and the University of Glasgow [1].

What made PaPaGei important was not just scale. It was openness and design intent. The model was pre-trained on 57,641 hours of single-channel PPG from 13,517 participants, pooled from three public sources: VitalDB (17,355 h), MIMIC-III (19,990 h), and MESA (20,296 h), for a total of 20,751,206 10-second segments [1]. That instantly gave the community a public baseline for large-scale PPG pretraining.
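
The corpus figures quoted above can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers stated in this section; the helper name `segments_to_hours` is illustrative.

```python
SECONDS_PER_HOUR = 3600


def segments_to_hours(n_segments: int, segment_seconds: float) -> float:
    """Convert a count of fixed-length segments into recording hours."""
    return n_segments * segment_seconds / SECONDS_PER_HOUR


# Per-source hours as quoted in the text.
source_hours = {"VitalDB": 17_355, "MIMIC-III": 19_990, "MESA": 20_296}
total_hours = sum(source_hours.values())

# 20,751,206 segments of 10 seconds each, as quoted in the text.
hours_from_segments = segments_to_hours(20_751_206, 10)

print(total_hours)                 # 57641
print(round(hours_from_segments))  # 57642
```

The two totals agree to within about an hour, which is what you would expect if the per-source hours were rounded individually.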

Architecturally, PaPaGei uses a ResNet-style CNN encoder with 18 convolutional blocks and a 512-dimensional projection layer. That choice was deliberate. The authors favored strong local morphology extraction over attention-heavy sequence modeling, then built the foundation-model behavior into the pretraining objective rather than the backbone alone.

The pretraining method is where PaPaGei stands out. Instead of using generic augment-and-contrast alone, the paper explicitly tries to encode PPG morphology across individuals. The result is a self-supervised framework that treats morphology as signal, not nuisance. That matters for PPG more than for many other time series because waveform shape carries vascular, hemodynamic, and subject-specific information beyond beat timing.

PaPaGei was evaluated on 20 downstream tasks across 10 datasets, including regression and classification settings such as heart rate, activity, blood pressure, stress-related outcomes, and sleep-related tasks [1]. The paper's broad result is simple: the pretrained encoder transfers well, usually better than task-specific training from scratch and often better than more generic time-series baselines.

Its main limitation is also clear. PaPaGei is trained on mostly clinical-grade data. That gives it clean physiology, but it does not fully expose the model to the motion, skin contact shifts, ambient light drift, and missingness that define everyday wearables. That gap is exactly where Pulse-PPG enters.

Pulse-PPG, the first open field-trained wearable PPG foundation model

Paper: Saha et al., "Pulse-PPG: An Open-Source Field-Trained PPG Foundation Model for Wearable Applications Across Lab and Field Settings." Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, 2025. arXiv: 2502.01108, DOI: 10.1145/3746027, arXiv DOI: 10.48550/arXiv.2502.01108.

Pulse-PPG is arguably the most important follow-up to PaPaGei because it attacks the most obvious weakness in early PPG foundation models: overreliance on clean clinical data.

The authors, including Mithun Saha, Maxwell A. Xu, Wanting Mao, Sameer Neupane, James M. Rehg, and Santosh Kumar, position Pulse-PPG as the first open-source field-trained PPG foundation model [2]. The core dataset comes from a 100-day field study with 120 participants wearing Fossil Sport smartwatches, yielding roughly 55,000 hours of raw 100 Hz PPG collected in naturalistic conditions [2].

That dataset design matters more than the raw hour count. The model sees all the ugly things wearable PPG usually tries to filter away: motion artifacts, poor contact, ambient light fluctuations, and irregular user behavior. Instead of discarding that variability, Pulse-PPG treats it as the training distribution.

The model uses a ResNet encoder backbone and a contrastive training framework, but with a twist: the authors introduce a motif-based distance function to identify meaningful positives and negatives, rather than relying on naive augmentations alone [2]. In plain language, the method tries to preserve subtle shared structure in noisy pulse windows while avoiding the common failure mode where contrastive learning confuses meaningful physiological differences with artifact differences.

Pulse-PPG is evaluated on both wearable and clinical downstream tasks. The paper highlights transfer to stress classification, activity classification, heart rate regression, sleep disturbance classification, blood pressure regression, and hypertension detection across lab and field settings [2]. The headline finding is that field pretraining improved generalization not just to field tasks, which would be expected, but often to clinical tasks as well. That is a strong result because it suggests that robustness to noise can help the encoder learn more durable physiology, not just artifact tolerance.

If PaPaGei established the category, Pulse-PPG established an argument that many people in wearable health already suspected: for consumer and ambulatory devices, ecological validity of the pretraining corpus may matter as much as total hours.

GPT-PPG, the largest published PPG pretraining corpus

Paper: Chen et al., "Adapting a Generative Pretrained Transformer Achieves SOTA Performance in Assessing Diverse Physiological Functions Using Only Photoplethysmography Signals: A GPT-PPG Approach." arXiv: 2503.08015, DOI: 10.48550/arXiv.2503.08015, OpenReview: https://openreview.net/forum?id=hfgdwxbNOW.

GPT-PPG is the generative extreme of the field. Rather than contrastive pretraining or masked reconstruction, it asks whether a decoder-only GPT architecture can model PPG the way language models model token sequences.

The authors are David Chen, Xiao Hu, and colleagues from Emory University, Georgia Tech, and UCSF [3]. Their training corpus comes from routine ICU monitoring at UCSF and contains more than 200 million 30-second PPG samples, which corresponds to roughly 2.2 million hours of pretraining data, the largest published PPG corpus in the literature at the time [3].

GPT-PPG is offered in multiple model sizes, including 19M, 85M, 345M, and 1B parameter variants [3]. The architecture uses a linear patch embedding front end and standard causal transformer blocks adapted to continuous pulse signals. Conceptually, the model learns to predict future PPG patches from prior context.

This is a very different inductive bias from PaPaGei or Pulse-PPG. Contrastive models ask what windows belong together in representation space. GPT-PPG asks what waveform fragment should come next. That makes it naturally suited to sequence completion, denoising, and generative downstream use.
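
To make the "what comes next" objective concrete, here is a minimal sketch of how a PPG window can be turned into next-patch training pairs with a causal attention mask. The 40 Hz sampling rate, the 40-sample patch length, and the function names are assumptions for illustration; the source does not state the paper's exact patching scheme.

```python
import numpy as np


def patchify(signal: np.ndarray, patch_len: int) -> np.ndarray:
    """Split a 1D signal into non-overlapping patches, dropping any remainder."""
    n_patches = signal.shape[0] // patch_len
    return signal[: n_patches * patch_len].reshape(n_patches, patch_len)


def next_patch_pairs(patches: np.ndarray):
    """Inputs are patches 0..n-2; targets are the same sequence shifted by one."""
    return patches[:-1], patches[1:]


def causal_mask(n: int) -> np.ndarray:
    """Boolean mask where position i may attend only to positions <= i."""
    return np.tril(np.ones((n, n), dtype=bool))


# Synthetic 30-second pulse-like signal (assumed 40 Hz, 1.2 Hz pulse rate).
fs = 40
t = np.arange(30 * fs) / fs
ppg = np.sin(2 * np.pi * 1.2 * t)

patches = patchify(ppg, patch_len=40)        # one patch per second
inputs, targets = next_patch_pairs(patches)  # model sees inputs, predicts targets
mask = causal_mask(inputs.shape[0])
```

A decoder-only model would embed each input patch, apply transformer blocks under `mask`, and regress each output position onto the corresponding row of `targets`.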

The paper evaluates the model on a broad range of tasks including atrial fibrillation detection, heart rate estimation, respiration rate estimation, blood pressure estimation, and qualitative denoising behavior [3]. It also explores parameter-efficient fine-tuning, personalization, and mixed-objective fine-tuning that introduces bidirectional reconstruction during adaptation.

The most important takeaway is not that GPT-PPG replaces contrastive encoders. It is that generative pretraining works for PPG at massive scale, and scaling curves appear to matter. The limitation is deployment cost. Even the smaller variants are heavier than most CNN-based encoders, and the full-size generative family is not built for a watch chip. But as a research direction, GPT-PPG broadened the field's imagination about what a PPG foundation model can be.

AnyPPG, ECG-guided pretraining for physiologically grounded representations

Paper: Nie et al., "AnyPPG: An ECG-Guided PPG Foundation Model Trained on Over 100,000 Hours of Recordings for Holistic Health Profiling." arXiv: 2511.01747, DOI: 10.48550/arXiv.2511.01747, code: https://github.com/PKUDigitalHealth/AnyPPG.

AnyPPG is one of the most interesting second-generation PPG foundation models because it changes the pretraining question. Instead of treating PPG as a standalone signal stream, it uses synchronized ECG as physiological supervision.

The authors, led by Guangkun Nie, Xiaocheng Fang, Gongzheng Tang, Yujie Xiao, Jun Li, Bo Liu, Hongyan Li, and Shenda Hong at Peking University and related institutes, pretrain on 109,909 hours of synchronized PPG-ECG recordings from 58,796 subjects, producing about 40 million paired 10-second segments [4]. The corpus is aggregated from MC-MED, PulseDB, MESA, HSP, CFS, MIMIC, and VitalDB-derived resources described in the paper and appendix [4].

The architecture is a dual-branch system. A PPG encoder and an ECG encoder each produce embeddings that are projected into a shared latent space. During pretraining, the ECG branch acts as a physiological teacher. The goal is not to use ECG at inference time, but to align synchronized PPG and ECG representations so that the PPG encoder learns features consistent with underlying cardiac electrical activity [4].
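
One common way to align two modalities in a shared latent space is a symmetric InfoNCE loss over synchronized pairs. The sketch below assumes that formulation purely for illustration; the source does not specify AnyPPG's exact loss, and the temperature value and function names are hypothetical.

```python
import numpy as np


def l2_normalize(x: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    return x / (np.linalg.norm(x, axis=1, keepdims=True) + eps)


def log_softmax(logits: np.ndarray, axis: int) -> np.ndarray:
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))


def symmetric_info_nce(ppg_emb: np.ndarray, ecg_emb: np.ndarray,
                       temperature: float = 0.1) -> float:
    """Row i of each matrix comes from the same synchronized segment.

    Matching pairs sit on the diagonal of the similarity matrix; every
    other entry in the batch acts as a negative.
    """
    p, e = l2_normalize(ppg_emb), l2_normalize(ecg_emb)
    logits = p @ e.T / temperature
    idx = np.arange(logits.shape[0])
    loss_ppg_to_ecg = -log_softmax(logits, axis=1)[idx, idx].mean()
    loss_ecg_to_ppg = -log_softmax(logits, axis=0)[idx, idx].mean()
    return float(0.5 * (loss_ppg_to_ecg + loss_ecg_to_ppg))


# Synthetic check: aligned embeddings should score lower than unrelated ones.
rng = np.random.default_rng(0)
ecg = rng.normal(size=(16, 32))
aligned_ppg = ecg + 0.05 * rng.normal(size=(16, 32))
random_ppg = rng.normal(size=(16, 32))

loss_aligned = symmetric_info_nce(aligned_ppg, ecg)
loss_random = symmetric_info_nce(random_ppg, ecg)
```

At inference time only the PPG branch would be kept, which is consistent with the text's point that ECG is a training-time teacher rather than a deployment requirement.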

This is a very smart move for PPG. PPG measures peripheral hemodynamics, not electrical activity directly. ECG provides tighter access to rhythm and timing. By aligning to ECG during pretraining, AnyPPG tries to give the PPG encoder access to information that is otherwise only weakly reflected in the optical pulse waveform.

AnyPPG is evaluated on eight downstream PPG datasets spanning wearable and clinical settings, including PPG-DaLiA, UCI-BP, BUT PPG, Gyro-Acc-PPG, WESAD, DeepBeat, Real-World PPG, and WenXinWuYang, and also extends to phenome-wide disease detection [4]. One of the paper's most striking claims is that the learned embeddings support discrimination across 77 circulatory disorders and 230 non-circulatory conditions, with examples such as congestive heart failure, dementia, chronic renal failure, hyperkalemia, and glaucoma [4].

This does not mean PPG alone is suddenly a universal diagnostic modality. It does mean the representation appears to capture more systemic physiology than earlier work assumed. If that result holds up in external validation, AnyPPG could end up being the model that pushes PPG foundation models from benchmark culture toward digital biomarker discovery.

SiamQuality, quality-aware self-supervision before the term became mainstream

Paper: Ding et al., 2024. PMC full text: https://pmc.ncbi.nlm.nih.gov/articles/PMC11334241/.

SiamQuality deserves more attention than it usually gets because it addressed a real problem early: PPG foundation models are only as good as the way they handle low-quality signal.

The paper trains on 36 million 30-second PPG pairs, representing more than 600,000 hours from 21,000 patients [5]. The backbone is a ResNet CNN, and the self-supervised strategy is explicitly inspired by SimSiam-style training, but adapted so that signal quality is not treated as something to be scrubbed away before learning [5].

The key idea is quality pairing. PPG data in the real world has a long tail of poor-quality segments, and a standard contrastive setup can over-penalize them or collapse them into nuisance variation. SiamQuality instead uses a pairing and representation-learning strategy designed to preserve useful information even when segments are noisy. That is why the model is often cited as an important bridge between clean-clinical pretraining and field-robust pretraining.

The authors report fine-tuning on six downstream tasks, with state-of-the-art or near-state-of-the-art results across all of them. The headline numbers highlighted in the paper are particularly strong on respiration rate estimation and atrial fibrillation detection, with improvements of 43% and 7% respectively over prior state of the art [5].

In hindsight, SiamQuality looks like an early argument for what Pulse-PPG later made explicit: robustness is not just a downstream engineering step. It should be built into pretraining.

BIOT, a biosignal foundation model that includes PPG

Paper: Yang et al., "BIOT: Biosignal Transformer for Cross-Data Learning in the Wild." NeurIPS 2023. arXiv: 2305.10351, DOI: 10.48550/arXiv.2305.10351, OpenReview: https://openreview.net/forum?id=c2LZyTyddi.

BIOT is not a PPG-first model, but it belongs in any serious review of PPG foundation models because it helped establish the broader design space for cross-dataset biosignal pretraining.

Yang and colleagues introduced a transformer-based framework with a unified biosignal tokenization strategy designed to handle heterogeneous physiological data sources across modalities such as ECG, EEG, EMG, and PPG [6]. The central problem BIOT tackles is one that every physiological foundation model faces: biosignals vary wildly in sampling rate, channel count, amplitude scaling, and acquisition context.

The reason BIOT matters for PPG is not that it is the best model for wrist pulse signals. It is that it proved a transformer can be taught a common language for biosignals without being tied to a single collection protocol or modality. For teams building multimodal wearables or shared encoders across cardiac and neural data, BIOT is still a reference point.

If your goal is best-in-class transfer on PPG-only tasks, BIOT is usually outperformed by PPG-specialized encoders. If your goal is a broader physiological model stack, it remains relevant.

Apple's wearable model, the strongest proprietary benchmark

Paper: Abbaspourazad et al., "Self-Supervised Learning from Large-Scale Wearable Sensor Data for Multi-Task Biosignal Representation." arXiv: 2312.05409, DOI: 10.48550/arXiv.2312.05409, Apple ML Research page: https://machinelearning.apple.com/research/large-scale-training.

Any honest review of PPG foundation models has to include Apple, even though the model is proprietary.

Abbaspourazad and colleagues describe self-supervised pretraining on Apple Watch data curated from the Apple Heart and Movement Study, including about 141,000 participants over roughly 3 years for the PPG corpus [7]. The model uses an EfficientNet-style 1D CNN encoder, a projection head, and a momentum-optimized contrastive learning framework with participant-level positive pair selection, stochastic augmentations, and a regularized InfoNCE objective [7].

This participant-aware pairing is a subtle but important idea. In wearable data, segments from the same person contain stable latent factors such as vascular tone, device fit, habitual activity patterns, and demographic structure. Using that information as a pretraining signal helps the model learn persistent subject-level structure rather than just local beat morphology.
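
Participant-level positive pair selection can be sketched as follows: a positive pair is two different segments from the same person. This is an illustrative sampler only, with hypothetical names; it does not reproduce the augmentations, momentum encoder, or regularized objective described in the paper.

```python
import numpy as np


def sample_participant_pairs(participant_ids, n_pairs: int,
                             rng: np.random.Generator) -> np.ndarray:
    """Return (n_pairs, 2) segment indices where both come from one participant."""
    groups = {}
    for index, pid in enumerate(participant_ids):
        groups.setdefault(pid, []).append(index)

    # Participants with a single segment cannot form a positive pair.
    eligible = [idx for idx in groups.values() if len(idx) >= 2]
    if not eligible:
        raise ValueError("need at least one participant with two or more segments")

    pairs = np.empty((n_pairs, 2), dtype=int)
    for k in range(n_pairs):
        group = eligible[rng.integers(len(eligible))]
        pairs[k] = rng.choice(group, size=2, replace=False)
    return pairs


# Toy example: participant "c" has only one segment and is never sampled.
ids = ["a", "a", "a", "b", "b", "c"]
pairs = sample_participant_pairs(ids, n_pairs=10, rng=np.random.default_rng(0))
```

Segments from other participants in the same batch then serve as negatives, which is what pushes the encoder toward stable subject-level structure.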

Apple reports that the resulting embeddings encode demographics and health conditions and generalize across both PPG and ECG modalities [7]. The paper is one of the first to show, at truly consumer scale, that wearable biosignal pretraining can work outside the clinic.

Because the data and weights are not public, the model functions more as a strategic benchmark than a practical research baseline. But it likely influenced the entire field. Pulse-PPG, SiamQuality, and later multimodal PPG papers all read differently once you understand that proprietary wearable labs were already operating at this scale.

MOMENT, a general time-series foundation model that includes physiological data

Paper: Goswami et al., "MOMENT: A Family of Open Time-series Foundation Models." ICML 2024. arXiv: 2402.03885, DOI: 10.48550/arXiv.2402.03885.

MOMENT is not a dedicated PPG foundation model, but it is often used as a baseline because it is a serious open-source time-series foundation model trained on the Time Series Pile, a large collection of public datasets spanning domains such as healthcare, engineering, and finance [8].

The model uses masked time-series modeling and is designed to transfer across forecasting, classification, anomaly detection, and imputation tasks [8]. PPG and other physiological signals are part of the broader healthcare slice of the corpus, though not the model's exclusive focus.

Why include MOMENT here? Because in many benchmark sections, it is the generic baseline a PPG-specific model has to beat. If a PPG foundation model cannot outperform a broad time-series FM on downstream pulse tasks, its specialization is not buying much.

So far, dedicated PPG models usually do beat it, especially on morphology-sensitive health tasks. That is a useful result in itself. It suggests PPG is not just another time series. The modality has enough structure, artifact profile, and physiological specificity to reward bespoke pretraining.

The multimodal physiological supervision model, a strong 2025 result

Paper: "A robust PPG foundation model using multimodal physiological supervision." OpenReview 2025. OpenReview: https://openreview.net/forum?id=ZmGfCj1n2P.

One of the strongest newer entries is the 2025 OpenReview paper often described informally as the multimodal physiological supervision PPG model [9]. The core idea is elegant: use accompanying ECG and respiratory signals in ICU data to guide PPG pretraining, but keep the model unimodal at inference time.

This avoids a common problem in multimodal learning. If you force the final system to depend on multiple sensors, you make deployment harder. This paper instead uses multimodal structure only during representation learning. ECG and respiration help determine which PPG segments should be treated as similar or dissimilar during contrastive pretraining.
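
A simple way to picture physiology-guided pair selection is a mask that marks two PPG segments as positives when their ECG-derived heart rate and their respiratory rate are both close. The tolerances and the rule itself are assumptions made for this sketch; the source does not give the paper's actual similarity criterion.

```python
import numpy as np


def physiological_positive_mask(hr_bpm, rr_bpm,
                                hr_tol: float = 5.0,
                                rr_tol: float = 2.0) -> np.ndarray:
    """Boolean (n, n) mask: True where two segments share similar HR and RR.

    hr_bpm: heart rate per segment (beats/min), e.g. derived from paired ECG.
    rr_bpm: respiratory rate per segment (breaths/min).
    The tolerances are hypothetical values chosen for illustration.
    """
    hr = np.asarray(hr_bpm, dtype=float)
    rr = np.asarray(rr_bpm, dtype=float)
    close_hr = np.abs(hr[:, None] - hr[None, :]) <= hr_tol
    close_rr = np.abs(rr[:, None] - rr[None, :]) <= rr_tol
    mask = close_hr & close_rr
    np.fill_diagonal(mask, False)  # a segment is not its own positive
    return mask


# Segments 0 and 1 match on both signals; segment 3 matches on HR only.
mask = physiological_positive_mask(hr_bpm=[60, 62, 90, 61],
                                   rr_bpm=[12, 13, 12, 20])
```

The mask is only needed during pretraining. The deployed encoder still takes PPG alone, which is the property the paper emphasizes.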

The headline result is striking: with 3x fewer subjects than some state-of-the-art approaches, the model reports improvements of up to 36% in classification and 42% in regression on 14 of 15 downstream tasks [9]. Those tasks include field-facing endpoints such as stress and heart rate prediction.

This paper matters because it sharpens a lesson that AnyPPG also points toward: PPG pretraining gets better when it is physiologically supervised, not just statistically scaled.

Other emerging models worth watching

The field is already broader than the few named models most people cite.

Wavelet-Driven Masked Multiscale Reconstruction (MMR)

The OpenReview paper Wavelet-Driven Masked Multiscale Reconstruction for PPG Foundation Models pretrains on about 17 million unlabeled 10-second segments from roughly 32,000 smartwatch users [10]. Instead of reconstructing raw waveform windows, it masks and reconstructs wavelet multiresolution coefficients, forcing the encoder to learn across time and frequency scales.

This is a smart fit for PPG because physiologically meaningful information sits at multiple scales at once: beat morphology, respiratory modulation, and longer vascular trends. The paper reports that MMR matches or outperforms open-source PPG FMs on 11 of 13 tasks [10]. It still needs more external validation, but conceptually it is one of the most interesting new directions.
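
The idea of masking in wavelet space can be sketched with a hand-written Haar transform. The Haar wavelet, the three decomposition levels, and the 50% mask ratio are assumptions chosen to keep the example self-contained; the source does not state which wavelet family or masking scheme the paper uses.

```python
import numpy as np


def haar_dwt(x: np.ndarray, levels: int) -> list:
    """Multi-level Haar transform. Returns [d1, d2, ..., dL, aL].

    len(x) must be divisible by 2**levels.
    """
    coeffs, approx = [], x.astype(float)
    for _ in range(levels):
        even, odd = approx[0::2], approx[1::2]
        coeffs.append((even - odd) / np.sqrt(2))  # detail at this scale
        approx = (even + odd) / np.sqrt(2)        # coarser approximation
    coeffs.append(approx)
    return coeffs


def haar_idwt(coeffs: list) -> np.ndarray:
    """Invert haar_dwt exactly."""
    approx = coeffs[-1]
    for detail in reversed(coeffs[:-1]):
        out = np.empty(2 * approx.shape[0])
        out[0::2] = (approx + detail) / np.sqrt(2)
        out[1::2] = (approx - detail) / np.sqrt(2)
        approx = out
    return approx


def mask_coeffs(coeffs: list, mask_ratio: float, rng: np.random.Generator):
    """Zero out a random subset of coefficients at every scale."""
    masks = [rng.random(c.shape[0]) < mask_ratio for c in coeffs]
    masked = [np.where(m, 0.0, c) for c, m in zip(coeffs, masks)]
    return masked, masks


def masked_mse(pred: list, true: list, masks: list) -> float:
    """Reconstruction loss computed only on the masked coefficients."""
    sq_err = sum(float(np.sum((p[m] - t[m]) ** 2)) for p, t, m in zip(pred, true, masks))
    n_masked = sum(int(m.sum()) for m in masks)
    return sq_err / max(n_masked, 1)


rng = np.random.default_rng(0)
t = np.arange(256) / 25.6
signal = np.sin(2 * np.pi * 1.2 * t) + 0.1 * rng.normal(size=256)

coeffs = haar_dwt(signal, levels=3)
masked, masks = mask_coeffs(coeffs, mask_ratio=0.5, rng=rng)
baseline_loss = masked_mse(masked, coeffs, masks)  # loss if the model predicted zeros
```

In a real pipeline an encoder would receive `masked` and be trained to drive `masked_mse` below `baseline_loss`. Because each list entry corresponds to a different scale, the loss covers fast morphology and slower modulation at the same time.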

ProtoMM and pulse-motion foundation models

The OpenReview paper Leveraging Shared Prototypes for a Multimodal Pulse Motion Foundation Model proposes a prototype-based self-supervised framework that aligns PPG and accelerometry through a shared dictionary rather than CLIP-style negatives [11]. This is especially relevant for wearables, where motion is both nuisance and context.

I would not classify ProtoMM as a pure PPG foundation model in the same category as PaPaGei or Pulse-PPG, because the premise is multimodal from the start. But it is very relevant to the next generation of wearable foundation models, where PPG, IMU, skin temperature, and EDA may be trained together.

PPG-Distill

PPG-Distill is not a foundational pretraining method by itself, but it is important operationally because it attacks the deployment bottleneck [12]. The paper uses teacher-student distillation tailored to PPG, including prediction-level, feature-level, patch-level, rhythm, and morphology distillation. It reports up to 21.8% student performance improvement, 7x faster inference, and 19x lower memory use on heart rate estimation and AF detection [12].
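
A generic teacher-student objective combining a task loss with prediction-level and feature-level terms can be sketched as below. This is not the PPG-Distill loss: the patch-level, rhythm, and morphology terms are omitted, the weights `alpha` and `beta` are hypothetical, and student and teacher features are assumed to have already been projected to the same width.

```python
import numpy as np


def distillation_loss(student_pred: np.ndarray, teacher_pred: np.ndarray,
                      student_feat: np.ndarray, teacher_feat: np.ndarray,
                      target: np.ndarray,
                      alpha: float = 0.5, beta: float = 0.1) -> float:
    """Task loss plus two distillation terms (all mean squared error).

    alpha weights agreement with the teacher's predictions;
    beta weights agreement with the teacher's intermediate features.
    """
    task = np.mean((student_pred - target) ** 2)
    pred_kd = np.mean((student_pred - teacher_pred) ** 2)
    feat_kd = np.mean((student_feat - teacher_feat) ** 2)
    return float(task + alpha * pred_kd + beta * feat_kd)


rng = np.random.default_rng(0)
target = rng.normal(size=8)            # e.g. heart rate labels (standardized)
features = rng.normal(size=(8, 16))

# A student that matches the teacher and the labels exactly incurs no loss.
loss_match = distillation_loss(target, target, features, features, target)

# A student offset by 1.0 pays the task term (1.0) plus alpha * 1.0.
loss_off = distillation_loss(target + 1.0, target, features, features, target)
```

The appeal for wearables is that the student can be much smaller than the teacher while still being trained against the teacher's richer signal, not just the labels.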

That matters because the wearable future of foundation models depends not just on representation quality, but on whether the resulting models can run on edge hardware.

Pretraining methods explained: the main schools of thought

Across the current PPG foundation model literature, four pretraining families dominate.

1. Contrastive learning

This is the most common approach, used in PaPaGei, Pulse-PPG, Apple's wearable model, and several multimodal variants. The idea is to pull semantically related segments together in embedding space and push unrelated segments apart.

What counts as "related" differs by paper:

- Different augmented views of the same segment

- Segments from the same person

- Morphologically similar pulse patterns

- Synchronized segments with similar ECG or respiratory context

Contrastive learning works well when pair selection is meaningful. It works poorly when positives and negatives are naive. In PPG, that is a big deal because a noisy segment may still contain the right physiology.

2. Masked reconstruction

MOMENT and newer wavelet-driven PPG models use masked modeling. Hide part of the input, then train the model to reconstruct it. The advantage is that the model must learn local and global structure without needing explicit positive and negative pairs.

For PPG, masked reconstruction is especially appealing when done in a transformed representation, such as wavelet space, because that aligns better with multiscale physiology than reconstructing raw samples alone.

3. Autoregressive generation

GPT-PPG is the clearest example. Predict the next patch or segment from prior context. This is closest in spirit to language modeling.

The benefit is that it naturally learns sequence continuity, rhythm, and generative structure. The downside is heavier models and more expensive inference.

4. Physiological guidance and multimodal alignment

AnyPPG and the OpenReview multimodal-supervision paper show this best. Use ECG, respiration, or other synchronized signals as supervision during pretraining, but keep inference unimodal on PPG.

This may prove to be the most promising long-term strategy for clinical-grade models because it injects mechanistic structure into the representation. It tells the encoder not just what pulse morphology looks like, but what physiological states it should line up with.

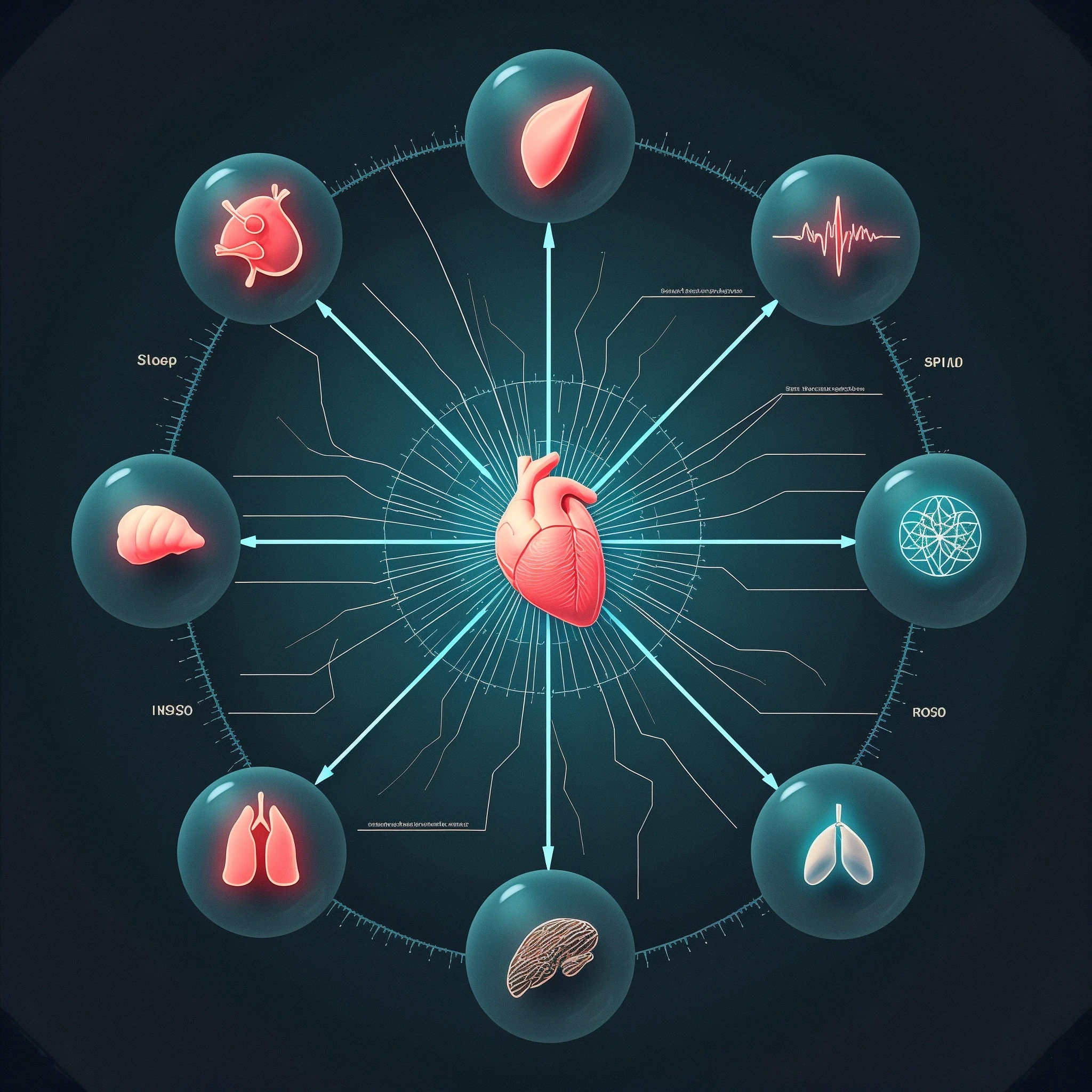

What downstream tasks are these models actually good at?

The benchmark suite is broad now, and that is one sign the field is maturing. Across papers, the recurring downstream tasks are:

- Heart rate estimation

- Respiration rate estimation

- Atrial fibrillation and arrhythmia detection

- Blood pressure estimation and hypertension classification

- Stress classification

- Activity classification

- Sleep quality, disturbance, or stage-related tasks

- Signal denoising or waveform reconstruction

- Phenotype and disease screening

The strongest and most reproducible wins so far are on tasks where PPG is already known to be informative: heart rate, pulse interval variability, blood pressure proxies, AF screening, and stress-related autonomic inference. More speculative disease-screening claims, especially outside cardiovascular domains, are exciting but should still be read carefully.

If you need a clinical framing of one of the best-established downstream applications, see our article on PPG-based atrial fibrillation screening. For the data bottleneck these models are trying to solve, see public PPG datasets and benchmarks.

What this means for the wearable health industry

For the wearable industry, PPG foundation models change the stack.

Historically, most wearable vendors shipped a handful of hand-tuned pipelines: one for heart rate, one for HRV, maybe one for SpO2, maybe one for stress. Each feature required its own data program, model, and validation effort. Foundation models make it possible to treat the PPG encoder as a platform asset.

That has major implications:

- New downstream features can be launched with far less labeled data.

- Personalization becomes easier because you fine-tune a strong prior rather than start from scratch.

- Cross-device transfer becomes more realistic if the pretraining set is large and diverse enough.

- Robustness to field noise can be learned upstream instead of patched in later.

There is also a hardware angle. The best foundation models still depend on the quality of the signal they receive. Clinical-grade PPG platforms and carefully engineered wearables are not made obsolete by better models. In some ways, they become even more valuable. Better sensors create richer pretraining corpora, and richer corpora produce better models.

That is the Sensor Bio angle in one sentence: clinical-grade data infrastructure is a foundation-model moat.

The teams that can collect synchronized, longitudinal, multi-context PPG at scale, ideally with paired ECG, blood pressure, respiration, and outcome labels, will shape the next generation of wearable health AI.

Where the field is headed

Three trends look especially important.

First, wearable-native pretraining will keep growing. Pulse-PPG and the new smartwatch-scale masked models already show that field data is not just a noisy version of clinical data. It is a distinct domain that deserves its own pretraining strategy.

Second, multimodal physiological supervision will likely become standard. ECG-guided and respiration-guided objectives are a clean way to make PPG encoders more physiologically grounded without requiring multimodal sensors at deployment.

Third, the bottleneck will shift from pretraining to evaluation and clinical validity. The field now has enough models. What it still lacks are shared external benchmarks, prospective validation, device-diverse replication, and clearer standards for when a representation is truly general versus just broadly benchmarked.

FAQ

What is a PPG foundation model?

A PPG foundation model is a large pre-trained neural network that learns reusable representations from large corpora of unlabeled or weakly labeled photoplethysmography signals. Instead of training a separate model for every task, the pretrained encoder is adapted to downstream problems like heart rate estimation, AF detection, blood pressure estimation, stress classification, and sleep analysis.

Which was the first open PPG foundation model?

PaPaGei is generally recognized as the first open foundation model built specifically for PPG signals. It was trained on more than 57,000 hours of public PPG data from VitalDB, MIMIC-III, and MESA, and was published open access at ICLR 2025 [1].

Which PPG foundation model has the largest training corpus?

Among published PPG-specific models, GPT-PPG reports the largest corpus, more than 200 million 30-second PPG samples, or roughly 2.2 million hours of data [3]. The tradeoff is that generative transformer models are heavier than CNN-based encoders for deployment.
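Segment counts and hours can be related with a one-line conversion. The sketch below is a quick sanity check with hypothetical helper names. It assumes fixed-length, non-overlapping segments, which is an assumption made here for the arithmetic and not a detail taken from the paper.

```python
def corpus_hours(num_segments, segment_seconds):
    """Total recording time in hours for fixed-length, non-overlapping segments."""
    return num_segments * segment_seconds / 3600

def segments_for_hours(hours, segment_seconds):
    """Number of fixed-length segments needed to cover a given number of hours."""
    return hours * 3600 / segment_seconds

# Exactly 200 million 30-second segments gives the floor of the corpus size.
floor_hours = corpus_hours(200_000_000, 30)

# Segment count implied by a 2.2-million-hour corpus of 30-second segments.
implied_segments = segments_for_hours(2_200_000, 30)
```

Under that assumption, 200 million segments is a floor of about 1.67 million hours, and a 2.2-million-hour corpus corresponds to about 264 million segments, which is consistent with "more than 200 million."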

Which model is best for wearable data collected in the real world?

Pulse-PPG is the clearest open-source model optimized for real-world wearables because it was pretrained on roughly 55,000 hours of field-collected smartwatch PPG from a 100-day study. Its main contribution is robustness to the artifact profile seen outside the clinic [2].

Why use ECG-guided pretraining for PPG?

ECG gives direct access to electrical cardiac timing and rhythm, while PPG measures peripheral hemodynamics. ECG-guided pretraining helps a PPG encoder learn representations that are more tightly linked to underlying cardiovascular physiology, even if the deployed model uses only PPG at inference time. AnyPPG is the clearest example of this approach [4].
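One common way to implement this kind of cross-modal guidance is a contrastive (InfoNCE-style) loss that pulls each PPG embedding toward the embedding of its time-synchronized ECG segment and away from the other ECG segments in the batch. The sketch below illustrates that general idea under stated assumptions. It is not AnyPPG's published objective, and the function names and toy embeddings are hypothetical.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length (left unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]

def info_nce(ppg_emb, ecg_emb, temperature=0.1):
    """Mean cross-entropy of matching PPG embedding i to ECG embedding i."""
    ppg = [l2_normalize(v) for v in ppg_emb]
    ecg = [l2_normalize(v) for v in ecg_emb]
    losses = []
    for i, p in enumerate(ppg):
        # Cosine similarity to every ECG embedding in the batch, scaled by temperature.
        logits = [sum(a * b for a, b in zip(p, e)) / temperature for e in ecg]
        # Numerically stable log-sum-exp over the batch.
        m = max(logits)
        log_denom = m + math.log(sum(math.exp(z - m) for z in logits))
        # Negative log-probability of the true (paired) ECG segment.
        losses.append(log_denom - logits[i])
    return sum(losses) / len(losses)

# Toy 2-D embeddings: row i of each list comes from the same time window.
ppg_batch = [[1.0, 0.0], [0.0, 1.0]]
ecg_paired = [[0.9, 0.1], [0.1, 0.9]]
ecg_mismatched = ecg_paired[::-1]  # pairs deliberately swapped

loss_paired = info_nce(ppg_batch, ecg_paired)
loss_mismatched = info_nce(ppg_batch, ecg_mismatched)
```

The loss is lower when paired segments land close together in embedding space, which is the pressure that shapes the PPG encoder during pretraining. The ECG branch is only needed to compute the loss, so it can be dropped at inference time.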

Are generic time-series foundation models enough for PPG?

They are useful baselines, but usually not sufficient if your goal is best performance on pulse-wave tasks. Models like MOMENT can transfer reasonably well, but specialized PPG models generally do better because they are trained to respect pulse morphology, physiological noise, and device-specific artifact structure [1][2][8].

References

[1] Pillai S, Ding G, Spathis D, Kawsar F, Malekzadeh M, et al. PaPaGei: Open Foundation Models for Optical Physiological Signals. ICLR 2025. arXiv:2410.20542. DOI: https://doi.org/10.48550/arXiv.2410.20542. OpenReview: https://openreview.net/forum?id=kYwTmlq6Vn

[2] Saha M, Xu MA, Mao W, Neupane S, Rehg JM, Kumar S. Pulse-PPG: An Open-Source Field-Trained PPG Foundation Model for Wearable Applications Across Lab and Field Settings. Proc ACM Interact Mob Wearable Ubiquitous Technol. 2025;9(3). arXiv:2502.01108. DOI: https://doi.org/10.1145/3746027. arXiv DOI: https://doi.org/10.48550/arXiv.2502.01108

[3] Chen D, Hu X, et al. Adapting a Generative Pretrained Transformer Achieves SOTA Performance in Assessing Diverse Physiological Functions Using Only Photoplethysmography Signals: A GPT-PPG Approach. arXiv:2503.08015. DOI: https://doi.org/10.48550/arXiv.2503.08015. OpenReview: https://openreview.net/forum?id=hfgdwxbNOW

[4] Nie G, Fang X, Tang G, Xiao Y, Li J, Liu B, Li H, Hong S. AnyPPG: An ECG-Guided PPG Foundation Model Trained on Over 100,000 Hours of Recordings for Holistic Health Profiling. arXiv:2511.01747. DOI: https://doi.org/10.48550/arXiv.2511.01747. Code: https://github.com/PKUDigitalHealth/AnyPPG

[5] Ding G, Lee RJ, Rudin C, Hu X. SiamQuality. 2024. Full text: https://pmc.ncbi.nlm.nih.gov/articles/PMC11334241/

[6] Tang W, et al. BIOT: Biosignal Transformer for Cross-Data Learning in the Wild. NeurIPS 2023. arXiv:2305.10351. DOI: https://doi.org/10.48550/arXiv.2305.10351. OpenReview: https://openreview.net/forum?id=c2LZyTyddi

[7] Abbaspourazad H, et al. Self-Supervised Learning from Large-Scale Wearable Sensor Data for Multi-Task Biosignal Representation. arXiv:2312.05409. DOI: https://doi.org/10.48550/arXiv.2312.05409. Apple ML Research: https://machinelearning.apple.com/research/large-scale-training

[8] Goswami M, Szafer K, Choudhry A, Cai Y, Li S, Dubrawski A. MOMENT: A Family of Open Time-series Foundation Models. ICML 2024. arXiv:2402.03885. DOI: https://doi.org/10.48550/arXiv.2402.03885

[9] A robust PPG foundation model using multimodal physiological supervision. OpenReview, 2025. https://openreview.net/forum?id=ZmGfCj1n2P

[10] Wavelet-Driven Masked Multiscale Reconstruction for PPG Foundation Models. OpenReview, 2025. https://openreview.net/forum?id=tVu1zfdbhu

[11] Leveraging Shared Prototypes for a Multimodal Pulse Motion Foundation Model. OpenReview, 2025. https://openreview.net/forum?id=CtcKEAojoE

[12] Ni J, et al. PPG-Distill: Efficient Photoplethysmography Signals Analysis via Foundation Model Distillation. arXiv:2509.19215. DOI: https://doi.org/10.48550/arXiv.2509.19215